What if you opened a travel app today and said, "Plan my trip to Jeju Island next month"? In 2026, AI that searches flights, compares accommodation, and even handles payment on its own is expected to move from experimental demo to widely adopted service.

MIT Technology Review characterizes the AI of 2026 not as 'chatbots responding to prompts' but as `Agentic AI`: the AI receives a goal, breaks it down into steps, selects the necessary tools, and carries out the tasks on its own. If we have so far used AI as a 'tool for asking questions,' this marks the turning point where we start to treat it as an 'entity to delegate tasks to.'
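The goal, steps, tools, and execution sequence described above can be sketched in a few lines. This is only an illustrative sketch under stated assumptions, not how any particular product works: the tool functions, the fixed `plan`, and the `NEEDS_APPROVAL` rule are all hypothetical stand-ins, and a real agent would ask a model to produce the plan.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical tools: stand-ins for real flight, hotel, and payment services.
def search_flights(goal: str) -> str:
    return f"flight options for: {goal}"

def compare_hotels(goal: str) -> str:
    return f"hotel shortlist for: {goal}"

def pay(goal: str) -> str:
    return f"payment completed for: {goal}"

TOOLS: dict[str, Callable[[str], str]] = {
    "search_flights": search_flights,
    "compare_hotels": compare_hotels,
    "pay": pay,
}

# Assumption of this sketch: payment never runs without human approval.
NEEDS_APPROVAL = {"pay"}

@dataclass
class StepResult:
    tool: str
    status: str  # "done" or "skipped"
    output: str

def plan(goal: str) -> list[str]:
    """Break the goal into ordered steps (fixed here; a model would decide in practice)."""
    return ["search_flights", "compare_hotels", "pay"]

def run_agent(goal: str, approve: Callable[[str], bool]) -> list[StepResult]:
    """Execute each planned step with its tool, pausing on steps that need approval."""
    results = []
    for tool_name in plan(goal):
        if tool_name in NEEDS_APPROVAL and not approve(tool_name):
            results.append(StepResult(tool_name, "skipped", "awaiting human approval"))
            continue
        results.append(StepResult(tool_name, "done", TOOLS[tool_name](goal)))
    return results
```

Calling `run_agent("trip to Jeju Island", approve=lambda step: False)` runs the search and comparison steps but leaves the payment step marked as skipped, which is one simple way to express "delegate the legwork, keep the final decision."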

Once we start delegating, it is not only convenience that changes: the standards of responsibility, the cost of errors, and the role of oversight change fundamentally as well.

AI Beyond the Screen — What's Happening in Industrial Settings

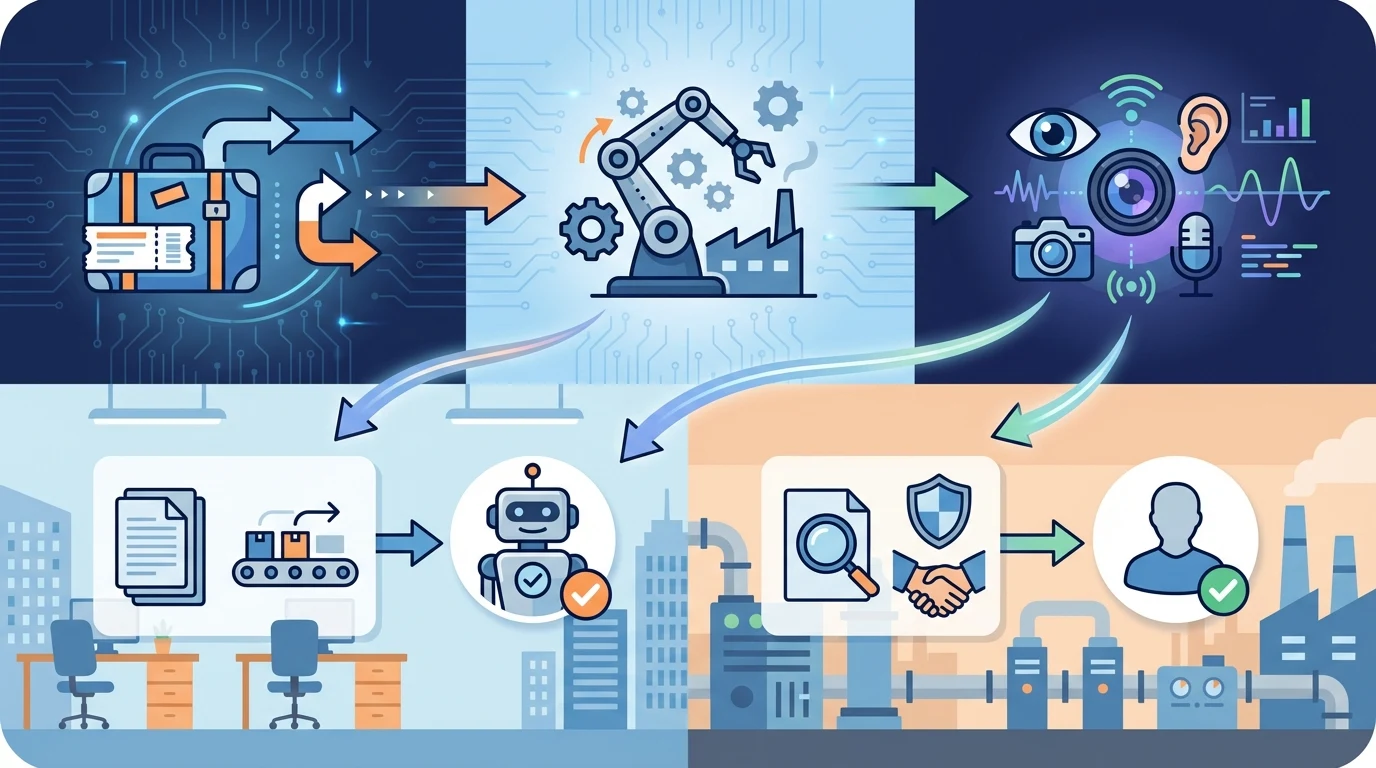

One of the key trends for 2026 highlighted by SK Telecom is `Physical AI`: pairing AI "brains" with robots so that repetitive or hazardous tasks in fields like logistics, manufacturing, construction, and agriculture are handled without human intervention. SK Telecom expects this combination to reach full-scale adoption.

If that's hard to visualize, consider three developments of recent years: industrial sensor prices have dropped, AI models have learned millions of tasks through simulation, and generative AI is now improving their ability to handle exceptions. The convergence of these three factors is bringing AI closer to being "ready for real-world deployment."

For field managers, this means that as routine tasks are automated, responsibilities such as responding to exceptional situations, quality control, and safety oversight will carry more weight.

When AI Gains Eyes and Ears, the Interface Itself Changes

BJC Journal predicts that multimodal AI will become the de facto standard by 2026. Models capable of processing not just text, but also voice, images, video, and sensor data simultaneously will become widespread. The mode of interaction is also expected to shift from typing-centric to speaking and showing.

LG AI Research's `EXAONE 4.5`, unveiled at MWC 2026, is also an extension of this trend. The ability to combine language and visual intelligence to interpret broader contexts is emerging as a core competitive advantage. While this may seem to lower the barrier to entry for users interacting with AI, it also means the scope of information AI collects and processes will significantly expand.

Job Anxiety: What Parts Are Truly Realistic?

It is natural for AI news to trigger worries about jobs first. The growing pressure to automate, particularly low-skilled, repetitive tasks, and the potential for deeper social inequality are clearly identified risks.

However, what's actually unfolding is less about "total replacement" and more about tasks being decomposed and reassembled. While customer service, document organization, basic analysis, and booking/payment processes will see faster automation, tasks requiring exception handling, clarifying responsibility, and trust management will actually demand more human involvement.

For businesses, what matters is not just adoption but drawing clear lines. Without a plan for which tasks to delegate to AI and which to keep for humans, costs will rise and results will be delayed. The gap between rapidly increasing AI investment and the time it takes to see real business outcomes is precisely where concerns about an 'AI bubble' originate.

The Year Regulation Catches Up with Technology — What Changes in 2026

2026 is the year the bulk of the European Union's AI Act becomes applicable, although some obligations phase in earlier or later. South Korea is also moving towards more clearly defined corporate responsibilities through its `Basic AI Act`. LG AI Research's ethics and accountability report, which prioritizes safety and trustworthiness, aligns with this global trend.

As AI increasingly becomes part of social infrastructure, bias, misinformation, and privacy breaches transform from mere technical side effects into tangible operational risks. The broader the scope of autonomous decision-making and execution for agentic AI, the greater the cost of control failures.

Note: Going forward, logs, auditing systems, and clear lines of responsibility may become more critical criteria for corporate competitiveness than mere performance demonstrations. An environment is emerging where verifiable AI, rather than just convenient AI, will prove more sustainable.
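To make "verifiable" concrete, here is a minimal sketch of an audit log in which every entry names a responsible owner and is chained to the previous entry by a hash, so later tampering can be detected. The field names (`actor`, `action`, `owner`) and the helper functions are assumptions made for illustration, not a standard or any vendor's format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_entry(log: list[dict], actor: str, action: str, owner: str) -> dict:
    """Append a record that names an accountable owner and links to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "owner": owner, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry

def verify(log: list[dict]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

If someone edits an earlier `action` after the fact, `verify` returns `False`, because the stored hash no longer matches the entry's content. A production system would also need secure storage and access control, which this sketch leaves out.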

The Inside Story of the AI Race — Power, Chips, and Sovereignty

According to Deloitte's analysis of the 2026 AI computing landscape, approximately two-thirds of total computing power is projected to be used not for training new models but for `inference`, that is, running existing models at scale. This means that infrastructure capable of running models in real time is as crucial as developing good models in the first place. It is also why hyperscale data centers, with tens to hundreds of thousands of GPUs operating continuously, will be necessary.

Adding to this is the global competition for AI sovereignty. With projections (cited by Deloitte and BJC Journal) indicating that regions outside the US and China are likely to invest over $100 billion in their own AI computing infrastructure, the sentiment that 'relying solely on borrowed AI is insufficient' is solidifying at the government level in various countries.

However, this competition also has its downsides. It risks fragmenting the technological ecosystem and brings side effects like increased energy consumption and over-investment in infrastructure. The long-term criterion will be who can operate sustainably, rather than who grows fastest.

What Individuals and Businesses Should Prioritize Now

This transformation isn't just a concern for developers. It directly impacts office workers with repetitive tasks, field managers, SMEs considering AI adoption, and marketers or planners who frequently need to assess information reliability.

Individuals should start by identifying repetitive and routine tasks in their work. Then, determine whether AI should only handle the initial draft or execute the task completely. The ability to judge how much to delegate and where to draw the line will be a more crucial skill than simply knowing how to use AI.

Businesses should first check three things: Are there genuinely suitable tasks for automation? Is there a person responsible for reviewing the results and taking accountability? Are there internal standards to address personal information, misinformation, and bias? Rushing into adoption without these three considerations could leave businesses vulnerable to the pitfalls of the AI bubble.
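The three questions above can be turned into a simple pre-adoption check. The sketch below is hypothetical: the `AdoptionPlan` fields and the wording of each gap message are assumptions chosen for illustration, and a real review would involve far more than three flags.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AdoptionPlan:
    suitable_tasks: list[str]            # tasks judged genuinely fit for automation
    accountable_reviewer: Optional[str]  # person who reviews results and is accountable
    has_data_standards: bool             # internal rules for personal data, misinformation, bias

def readiness_gaps(plan: AdoptionPlan) -> list[str]:
    """Return the unanswered questions; an empty list means all three checks pass."""
    gaps = []
    if not plan.suitable_tasks:
        gaps.append("No task has been identified as genuinely suitable for automation.")
    if not plan.accountable_reviewer:
        gaps.append("Nobody is assigned to review results and take accountability.")
    if not plan.has_data_standards:
        gaps.append("No internal standards cover personal information, misinformation, and bias.")
    return gaps
```

For example, `readiness_gaps(AdoptionPlan(["invoice triage"], None, True))` returns a single gap about the missing reviewer, signaling that adoption should wait until someone owns the results.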

Conclusion

The AI transformation in 2026 isn't about a single, transcendent model suddenly appearing. It is the point at which agentic AI, physical AI, and multimodal AI, together with the infrastructure and regulations evolving around them, all become a tangible reality.

Therefore, the real question is simple. It's not 'How amazing will AI become?' but 'How much will I delegate, and what will I remain fully responsible for?' Individuals and organizations that first establish these boundaries will be best equipped to navigate this rapid evolution.